Hello,

In today’s issue of Recurrent Neural Notes, I’ll explore the limits of Artificial Intelligence, particularly focusing on the computational capabilities of neural networks, answering the common question of whether there’s a ceiling to what AI can achieve.

This issue is a shorter version of a longer article I wrote on the topic on Medium. For those keen on delving deeper, I encourage you to read the original article!

If this issue sparks your interest, don’t forget to subscribe!

Are there limits to what AI can do?

Given the almost daily breakthroughs in the field, this is a question you might come across with the same frequency. In Computer Science, such a question is often analysed through the lens of computation theory - let’s look where neural networks - the backbone of modern AI - stand in this context.

Theory of Computation

Computation is about processing information. Operationally, this means following well-defined rules - rules that are clear, unambiguous and that can be described in a computational model. There have been many formal definitions of what this means precisely, but by far the most popular is the one introduced by Alan Turing in 1936 in his paper “On Computable Numbers, with an Application to the Entscheidungsproblem,” called Turing computability. According to this definition, a statement is well-defined if it can be expressed in terms of the initialization parameters of a Turing Machine - a theoretical model of computation capable of simulating any algorithm’s logic.

Turing Machine and Models of Computation

For the purposes of this issue, it’s enough to know that the Turing Machine consists of an infinite tape acting as a memory, a head that reads and writes data on the tape (i.e. carries out the computation), and a set of rules that define the next operation based on the current state and tape symbol. It turns out that this relatively simple idea is powerful enough to compute anything computable - if a problem is computable, a Turing Machine can solve it.

Naturally, the Turing Machine isn’t the only model of computation - other popular models include Finite State Machines, cellular automata, register machines, and importantly for us, digital logic gates.

Computing with Digital Logic Gates

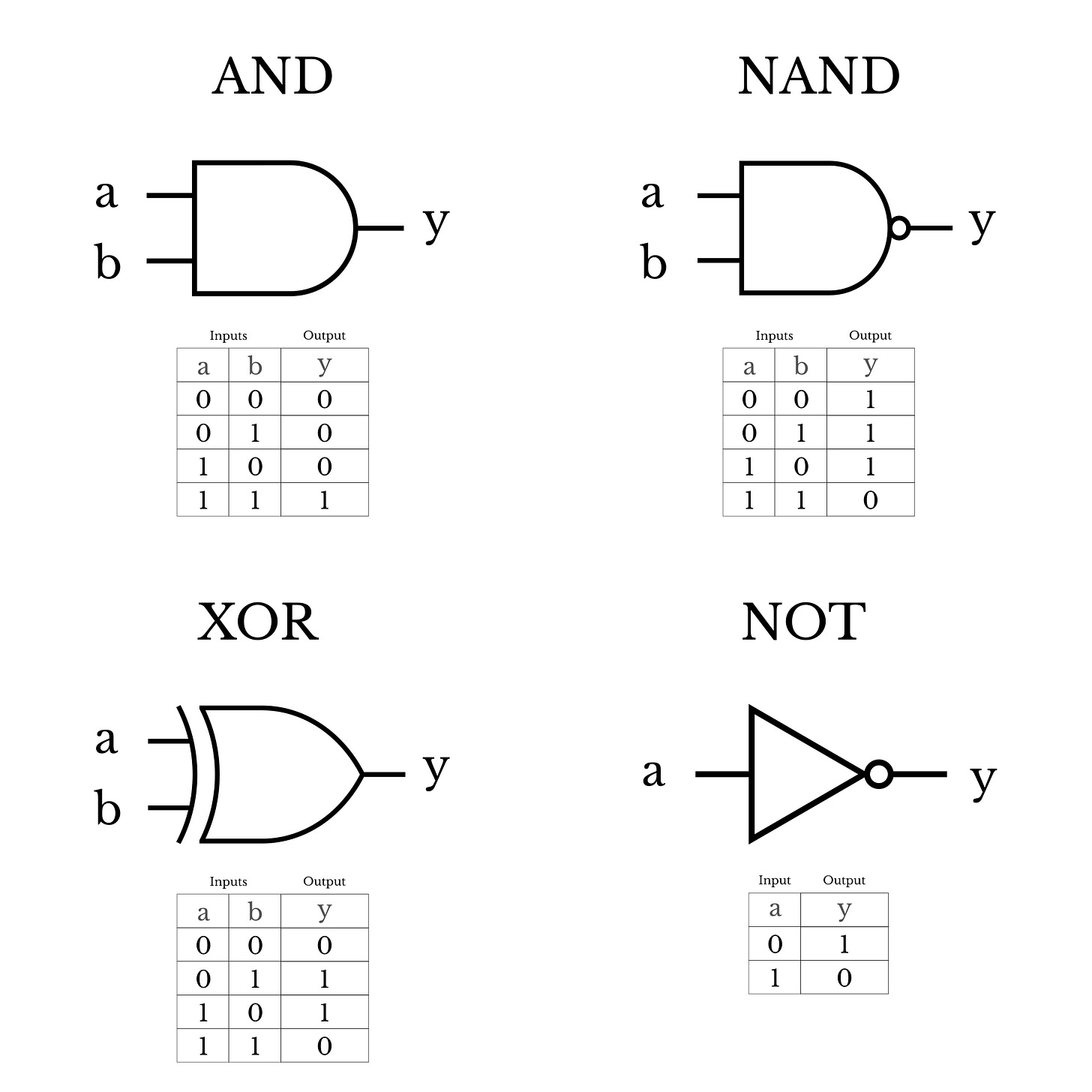

Digital logic gates are the basis of many electronic circuits, including the processor of a computer. They compute a logical function using binary inputs to produce binary output. You are likely familiar with logical AND (which outputs a 1 only if all inputs are 1), NOT (which inverts the inputs), or XOR (which outputs a 1 if the inputs are different). Here are some examples, together with the table of their inputs and output:

Importantly, they are Turing-complete - as a model of computation, they are as powerful as Turing Machines, meaning they can compute anything that can be computed.

Especially interesting is the NAND gate (NOT + AND), which outputs a 0 only if all inputs are one. It’s an example of a universal gate - using a combination of only NAND gates, you can simulate the functionality of any other gate!

I find this particularly interesting - a gate so simple a child can understand it, able to build any other gate, and by extension any circuit. Not only that - by being able to simulate any other gate, NAND gates themselves are Turing-complete - they are capable of computing anything computable!

How does all this relate to neural networks? Let’s see.

Neural Networks as a Model of Computation

Clearly, neural networks perform some kind of computation - this brings us back to our original question: How powerful are they? Are they Turing-complete, i.e. as good as it gets, or are there limits to what they can do, computationally? How can we tell? Turns out the answer is surprisingly straightforward.

First, let’s focus on the basic building block of neural networks - a single neuron. It computes a weighted sum of its inputs and passes it through an activation to produce an output. This sounds a lot like logic gates - receive some inputs, transform them, and produce an output. Does this mean neurons (and by extension, neural networks), are computationally equivalent to digital logic gates?

And indeed! We can learn such weights (+ bias) that the neuron essentially simulates a NAND gate. Here’s an example:

This means neurons are Turing-complete. They can simulate any digital logic circuit and compute any computable function.

Bittersweet Reality

While the analogy of neurons to NAND gates provides a fascinating theoretical foundation for the Turing-completeness of neural networks, it’s important to clarify that this isn’t the typical approach taken in practical AI development.

Furthermore, it doesn’t translate into the ability to solve all computable problems efficiently - we still face issues related to data quantity & quality, training complexity, resource constraints and modelling challenges.

In conclusion, while neurons and neural networks embody a remarkable computational potential that aligns with the most foundational concepts of computer science, realizing this potential in practice requires ongoing research and innovation — and the sky is the limit!

If you enjoyed this issue and want to learn more, check out the original Medium article, and consider subscribing!

Thank you for reading,

Matej Hladky | Recurrent Neural Notes